Amazon S3 Data Source

The Amazon S3 Data Source indexes documents and objects from an Amazon S3 bucket into a SearchBlox collection, making the S3 content searchable using SearchBlox.

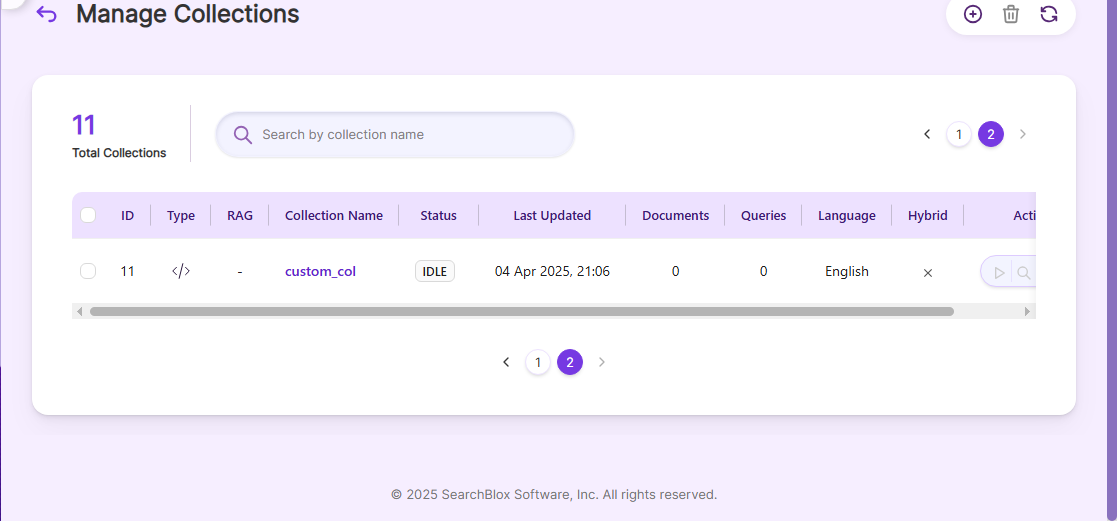

Configuring SearchBlox

Before using the Amazon S3 Data Source, make sure SearchBlox is installed properly and create a Custom Collection.

Configuration details of Amazon S3 Data Source

| Setting | Description |
| --- | --- |
| encrypted-ak | Encrypted Amazon S3 access key (an encrypter is available in the downloaded archive). |
| encrypted-sk | Encrypted Amazon S3 secret key (an encrypter is available in the downloaded archive). |
| region | Region of your Amazon S3 instance. |
| data-directory | Folder where data will be stored. The folder must have write permission. |
| api-key | SearchBlox API key. |
| colname | Name of the custom collection in SearchBlox. |
| queuename | Amazon SQS queue name, required for updating documents after indexing. |
| private-buckets | Set to true to index content from private buckets. |
| public-buckets | Set to true to index content from public buckets. |
| url | SearchBlox URL. |
| includebucket | List of S3 buckets to include. |
| include-formats | File formats to include. |
| expiring-url | Use expiring URLs in search results (this is the default). |
| expire-time | Expire time for URLs (default is 300 minutes). |
| permanent-url | Use permanent URLs in search results (expiring URLs are preferred if both are set). |
| max-folder-size | Maximum size of a folder (in MB) before it is swept. |
| servlet url & delete-api-url | Make sure the port number is correct; for example, use 8443 if SearchBlox runs on port 8443. |
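
Two of the settings above carry conditions that are easy to miss: data-directory must be writable, and the port in delete-api-url must match the port SearchBlox runs on. The following is a minimal pre-flight sketch, not part of the connector. It assumes the connector YAML has already been loaded into a flat Python dict keyed by the setting names above; the function name `preflight` and that flat layout are illustrative assumptions.

```python
import os
from urllib.parse import urlparse


def preflight(config: dict) -> list:
    """Return a list of problems found in a connector config.

    `config` is assumed (hypothetically) to be a flat dict keyed by the
    setting names from the configuration table.
    """
    problems = []

    # data-directory must exist and be writable by the connector process.
    data_dir = config.get("data-directory", "")
    if not os.path.isdir(data_dir):
        problems.append(f"data-directory does not exist: {data_dir!r}")
    elif not os.access(data_dir, os.W_OK):
        problems.append(f"data-directory is not writable: {data_dir!r}")

    # The port in delete-api-url should match the port in the SearchBlox url.
    # urlparse(...).port is None when no explicit port is present.
    sb_port = urlparse(config.get("url", "")).port
    api_port = urlparse(config.get("delete-api-url", "")).port
    if sb_port != api_port:
        problems.append(
            f"port mismatch: url uses {sb_port}, delete-api-url uses {api_port}"
        )

    return problems
```

An empty list means neither check found a problem; it does not validate credentials, bucket names, or any other setting.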

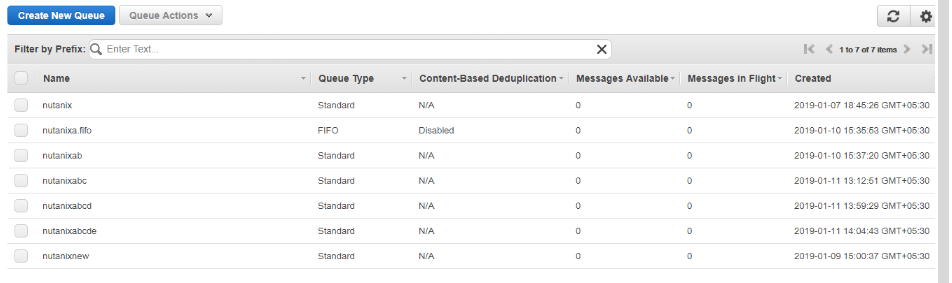

Steps to trigger notification when a document is updated in S3

- In the Amazon S3 console, go to Services → Simple Queue Service (SQS) under Application Integration.

- Click Create New Queue, give the queue a name, and configure it.

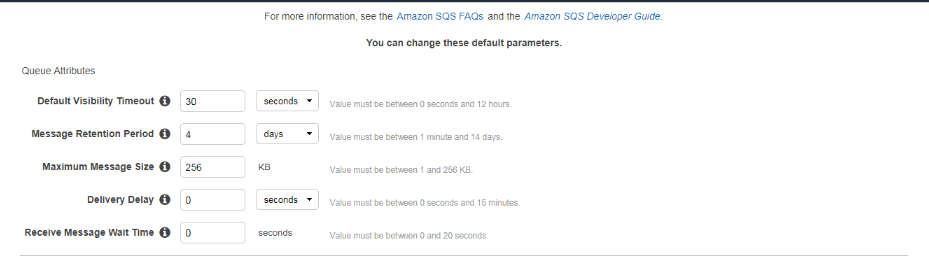

- Set Message Visibility Timeout, Retention Days, and Receive Message Wait Time.

- Set Receive Message Wait Time to a value between 1 and 20 seconds to enable long polling.

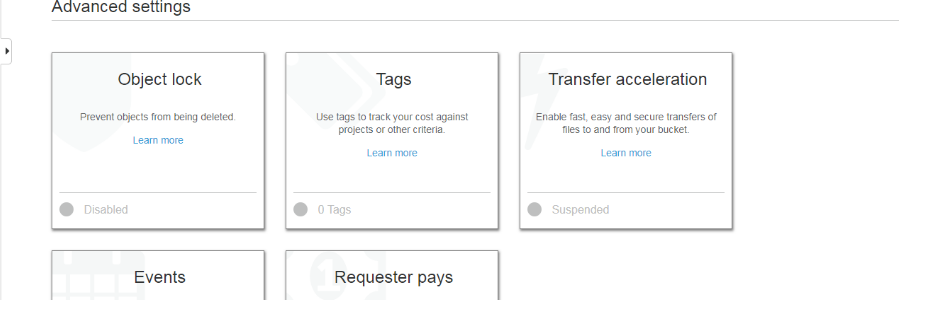

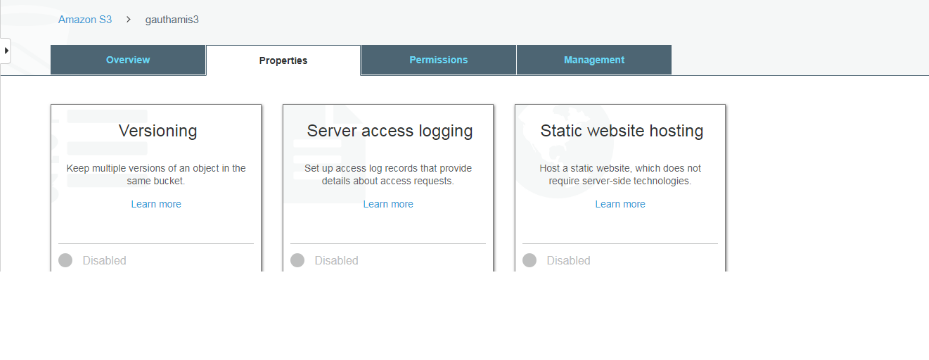

- In the S3 console, select the bucket that should send notifications to SQS, then go to Properties → Advanced Settings → Events.

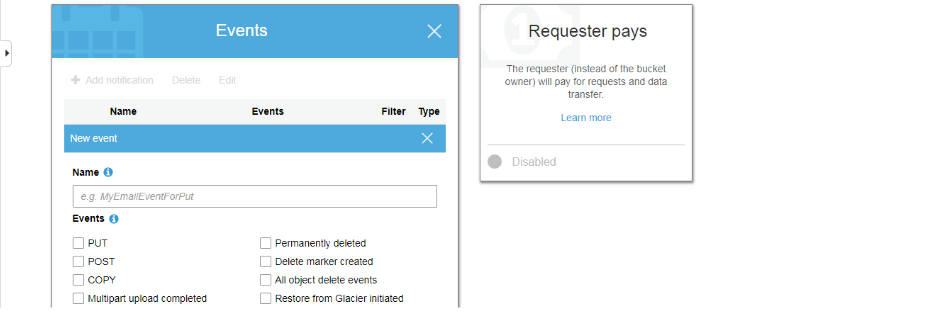

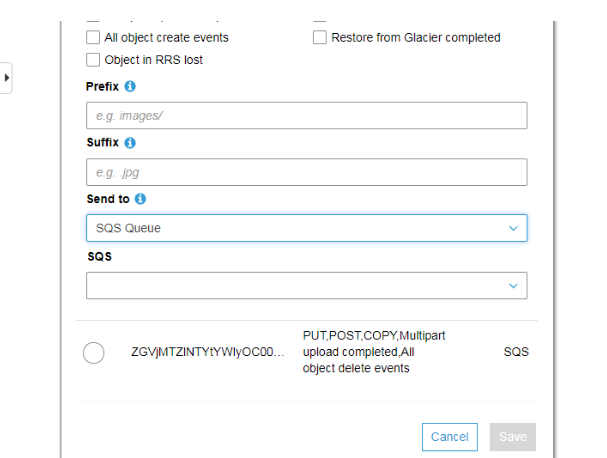

- Click Add Notification, give it a name, and select the events you want notifications for.

![image](https://files.readme.io/e00dec7-4.png)

- Under Send To, select SQS Queue, enter the name of the queue created earlier, and click Save.

- Now, whenever a document in the bucket changes, a notification will be sent to SQS.

- Provide the queue names in the S3 connector YAML file to trigger indexing whenever a document is updated.
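
The console steps above can also be scripted. The sketch below uses the AWS SDK for Python (boto3, a third-party package) and is only an illustration under stated assumptions: the function names, the default notification name, and the choice of object-created and object-removed events are not from SearchBlox. It also attaches a queue access policy, because S3 can deliver messages only to a queue that allows it to send them.

```python
import json


def queue_attributes(visibility_timeout_s=30, retention_days=4, wait_time_s=20):
    """Build SQS queue attributes; SQS expects every value as a string."""
    if not 1 <= wait_time_s <= 20:
        raise ValueError("wait_time_s must be 1-20 seconds to enable long polling")
    return {
        "VisibilityTimeout": str(visibility_timeout_s),
        "MessageRetentionPeriod": str(retention_days * 24 * 60 * 60),
        "ReceiveMessageWaitTimeSeconds": str(wait_time_s),
    }


def queue_policy(queue_arn, bucket):
    """Access policy that lets the given bucket send messages to the queue."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "s3.amazonaws.com"},
            "Action": "sqs:SendMessage",
            "Resource": queue_arn,
            "Condition": {"ArnLike": {"aws:SourceArn": f"arn:aws:s3:::{bucket}"}},
        }],
    }


def notification_config(queue_arn, name="searchblox-s3-changes"):
    """Bucket notification settings; the event selection is an assumption."""
    return {
        "QueueConfigurations": [{
            "Id": name,  # hypothetical notification name
            "QueueArn": queue_arn,
            "Events": ["s3:ObjectCreated:*", "s3:ObjectRemoved:*"],
        }]
    }


def setup_notifications(bucket, queue_name, region):
    """Create the queue and point the bucket's event notifications at it."""
    import boto3  # imported here so the helpers above work without the SDK

    sqs = boto3.client("sqs", region_name=region)
    s3 = boto3.client("s3", region_name=region)

    queue_url = sqs.create_queue(
        QueueName=queue_name, Attributes=queue_attributes()
    )["QueueUrl"]
    queue_arn = sqs.get_queue_attributes(
        QueueUrl=queue_url, AttributeNames=["QueueArn"]
    )["Attributes"]["QueueArn"]
    sqs.set_queue_attributes(
        QueueUrl=queue_url,
        Attributes={"Policy": json.dumps(queue_policy(queue_arn, bucket))},
    )
    # Note: this call replaces any notification configuration already on the bucket.
    s3.put_bucket_notification_configuration(
        Bucket=bucket, NotificationConfiguration=notification_config(queue_arn)
    )
    return queue_url
```

Calling `setup_notifications` requires AWS credentials with permission to manage both the queue and the bucket. The queue name passed in is the one to provide in the S3 connector YAML file.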
