Dynamic Content Collection

SearchBlox can index web content that is generated dynamically by JavaScript or by applications such as Single Page Applications (SPAs). Indexing a Dynamic Content Collection is slower than indexing an HTTP Collection because each page has to be rendered before it is indexed.

This is an example of dynamically generated content:

Note

- Dynamic Content Collection is recommended only for content generated using JavaScript (dynamically generated).

- Use HTTP Collection for static web content.

- Most of the settings for HTTP Collection will not be available for Dynamic Content Collection.

Prerequisites

- Chrome browser has to be installed on the system to use Dynamic Content Collection.

- Chrome browser version must be 83 or higher.

- The Chrome browser version must match the Chrome driver version found in <SearchBlox-Installation-Path>\webapps\searchblox\drivers.
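The version prerequisites above can be checked with a short script. The following is a minimal sketch in Python, not part of SearchBlox. It assumes the version text looks like the usual output of the browser and driver (for example "Google Chrome 114.0.5735.90") and that comparing only the major version is sufficient. The function names are illustrative.

```python
import re

MIN_CHROME_MAJOR = 83  # minimum Chrome version stated in the prerequisites


def major_version(version_output):
    """Return the major version from text such as 'Google Chrome 114.0.5735.90'."""
    match = re.search(r"(\d+)\.\d+", version_output)
    if match is None:
        raise ValueError(f"no version number found in {version_output!r}")
    return int(match.group(1))


def check_versions(chrome_output, driver_output):
    """Return a list of problems; an empty list means both prerequisites hold."""
    problems = []
    chrome = major_version(chrome_output)
    driver = major_version(driver_output)
    if chrome < MIN_CHROME_MAJOR:
        problems.append(f"Chrome {chrome} is older than {MIN_CHROME_MAJOR}")
    # Assumption: only the major versions need to agree.
    if chrome != driver:
        problems.append(f"Chrome {chrome} does not match ChromeDriver {driver}")
    return problems


# Sample strings stand in for real command output.
print(check_versions("Google Chrome 114.0.5735.90", "ChromeDriver 113.0.5672.63"))
```

On Linux, the two input strings can typically be obtained by running the browser and the driver binary with the --version flag.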

Installation

Windows

- Download the required driver from here.

- Rename the downloaded file to chromedriver_win.exe and place it in

C:\SearchBloxServer\webapps\searchblox\drivers

Linux

- Download the required driver from here.

- Rename the downloaded file to chromedriver_linux and place it in

/opt/searchblox/webapps/searchblox/drivers

- Provide SearchBlox user permissions using the following commands.

chown -R searchblox:searchblox chromedriver_linux

chmod -R 755 chromedriver_linux

- Install Headless Chrome by running the following commands.

wget https://dl.google.com/linux/direct/google-chrome-stable_current_x86_64.rpm

sudo yum install ./google-chrome-stable_current_*.rpm

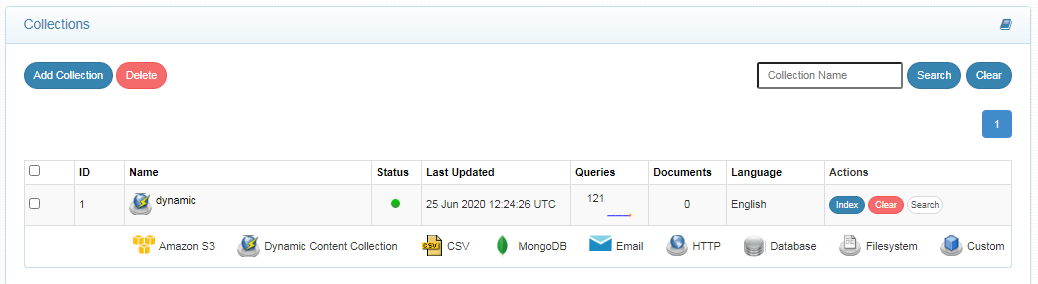

Creating Dynamic Content Collection

- After logging in to the Admin Console, click the Add Collection button.

- Enter a unique Collection name for the data source.

- Choose Dynamic Content Collection as Collection Type.

- Choose the language of the content (if the language is other than English).

- Click Add to create the collection.

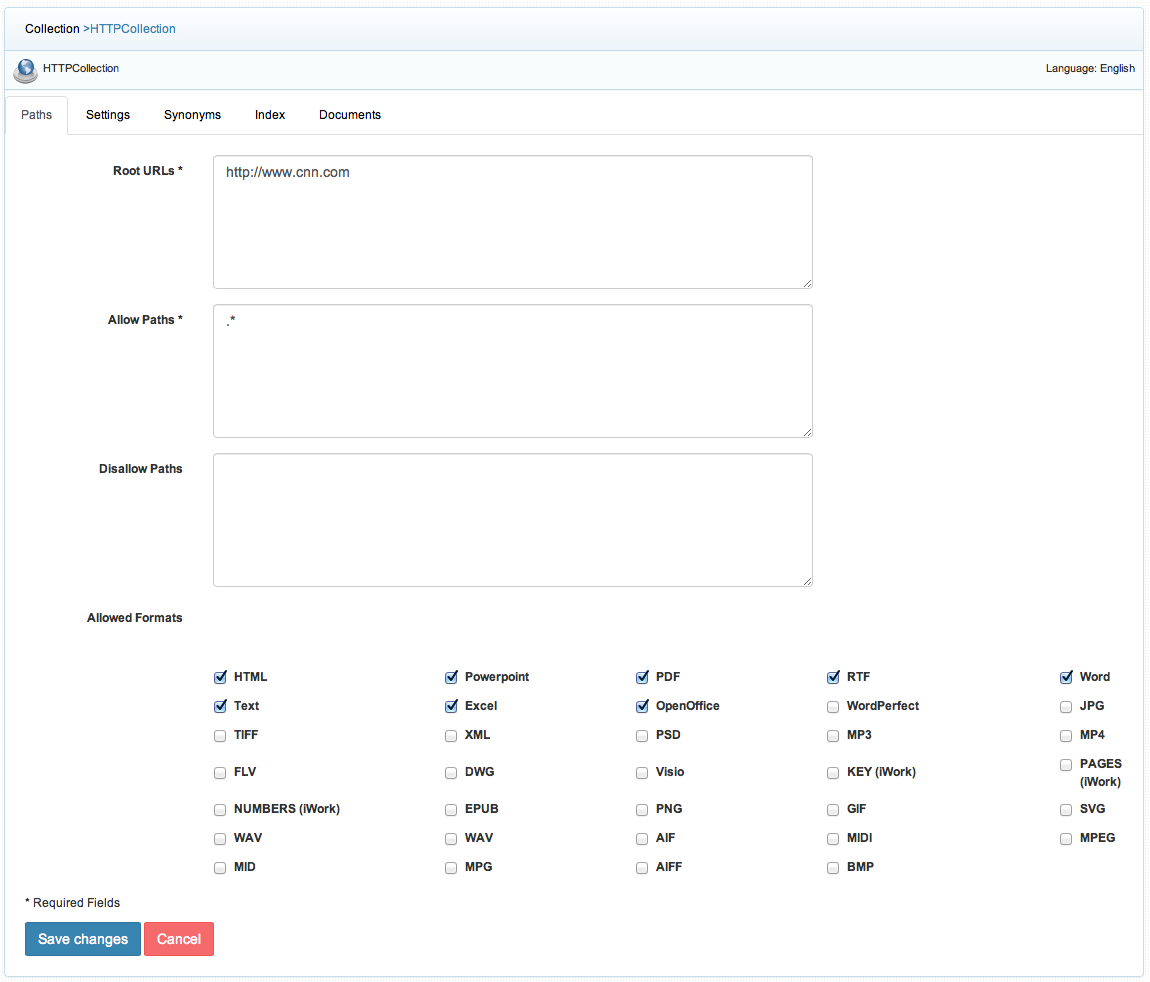

Dynamic Content Collection Paths

The Dynamic Content Collection Paths allow you to configure the Root URLs and the Allow/Disallow paths for the crawler. To access the paths for the Dynamic Content Collection, click the collection name in the Collections list.

Root URLs

- The root URL is the starting URL for the crawler. It requests this URL, indexes the content, and follows links from the URL.

- The root URL entered should have regular HTML HREF links that the crawler can follow.

- In the Paths sub-tab, enter at least one root URL for the Dynamic Content Collection in the Root URLs.

Allow/Disallow Paths

- Allow/Disallow paths tell the crawler which URLs to include or exclude.

- Allow and Disallow paths make it possible to manage a collection by excluding unwanted URLs.

- An Allow path is mandatory in a Dynamic Content Collection; it limits indexing to the subdomain provided in Root URLs.

| Field | Description |
|---|---|
| Root URLs | The starting URL for the crawler. You need to provide at least one root URL. |
| Allow Paths | http://www.cnn.com/ (Informs the crawler to stay within the cnn.com site.) .* (Allows the crawler to go to any external URL or domain.) |
| Disallow Paths | Examples: .jsp, /cgi-bin/, /videos/, ?params |
| Allowed Formats | Select the document formats that need to be searchable within the collection. |

Note

- Keep the crawler within the required domain(s).

- Enter the Root URL domain name(s) (for example cnn.com or nytimes.com) within the Allow Paths to ensure the crawler stays within the required domains.

- If .* is left as the value within the Allow Paths, then the crawler will go to any external domain and index the web pages.
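The effect of Allow and Disallow paths can be illustrated with a small filter. The following is a minimal sketch in Python under stated assumptions, not SearchBlox's actual matching logic: each entry is treated as a plain substring of the URL, .* is treated as "any URL", and a Disallow match takes precedence over an Allow match. The function name is hypothetical.

```python
def is_allowed(url, allow_paths, disallow_paths):
    """Decide whether a crawler should keep a URL (illustrative rules only)."""
    # Assumption: a disallow entry wins over any allow entry.
    if any(entry in url for entry in disallow_paths):
        return False
    # Assumption: ".*" means "any URL"; other entries are plain substrings.
    return any(entry == ".*" or entry in url for entry in allow_paths)


allow = ["cnn.com"]
disallow = [".jsp", "/cgi-bin/", "/videos/"]

for url in [
    "http://www.cnn.com/world/index.html",
    "http://www.cnn.com/videos/clip.html",
    "http://www.example.org/index.html",
]:
    print(url, is_allowed(url, allow, disallow))
```

With cnn.com as the only Allow entry, the example.org URL is rejected. Replacing the Allow list with .* would let the crawler leave the domain, which is the situation the note above warns about.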

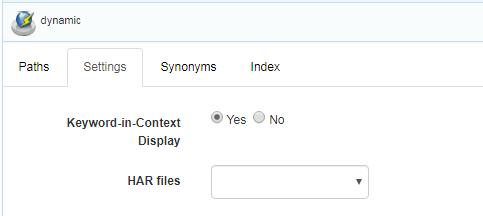

Dynamic Content Collection Settings

- Only one setting is available for this collection: the option to upload the HAR file used to index the dynamically generated content.

- HAR is an HTTP Archive file that can be downloaded from a dynamically generated website. Copy this file into the "har" folder in WEB-INF (create the har folder if it is not present).

- After SearchBlox is restarted, the HAR file can be selected from the Dynamic Content Collection settings for indexing.
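A HAR file is a JSON document, so its contents can be inspected before it is copied into the har folder. The following is a minimal sketch in Python that lists the distinct request URLs recorded in a HAR document, assuming the standard HAR layout (log, entries, request, url). The function name and the sample data are illustrative, and the sketch does not show how SearchBlox itself reads the file.

```python
import json


def har_urls(har_text):
    """Return the distinct request URLs in a HAR document, in first-seen order."""
    har = json.loads(har_text)
    seen = []
    for entry in har.get("log", {}).get("entries", []):
        url = entry.get("request", {}).get("url")
        if url and url not in seen:
            seen.append(url)
    return seen


# A tiny inline sample stands in for a HAR file exported from the browser.
sample = json.dumps({
    "log": {
        "entries": [
            {"request": {"url": "https://example.com/"}},
            {"request": {"url": "https://example.com/app.js"}},
            {"request": {"url": "https://example.com/"}},
        ]
    }
})
print(har_urls(sample))
```

To inspect a real export, read the file's text and pass it to har_urls in place of the sample string.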

| Section | Setting | Description |
|---|---|---|
| Keyword-in-Context Search Settings | Keyword-in-Context Display | The keyword-in-context returns search results with the description displayed from content areas where the search term occurs. |
| HAR files | HAR files | Select the HAR file required to fetch the URLs of the page. The downloaded HAR file must first be copied into the ../WEB-INF/har folder. |

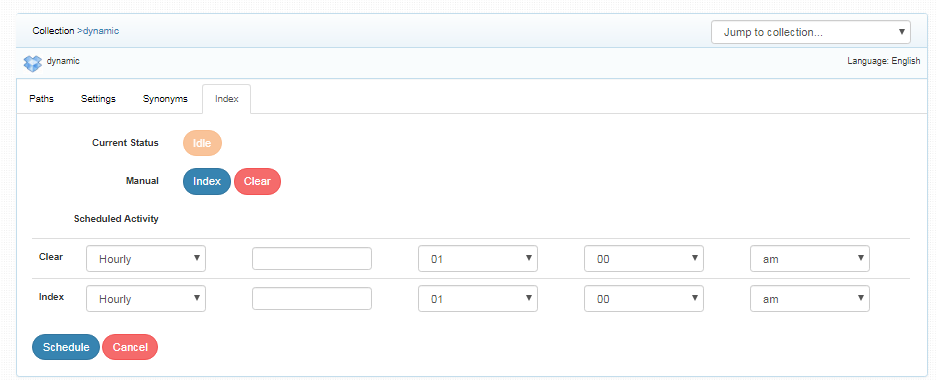

Index Activity

- A Dynamic Content Collection can be indexed or cleared on-demand, on a schedule, or through API requests.

| Activity | Description |
|---|---|
| Index | Starts the indexer for the selected collection. Starts indexing from the root URLs. |
| Clear | Clears the current index for the selected collection. |
| Scheduled Activity | For each collection, any of the following scheduled indexer activity can be set: Index - Set the frequency and the start date/time for indexing a collection. Clear - Set the frequency and the start date/time for clearing a collection. |

- The indexing operation starts the indexer for the selected collection from the root URLs.

- Clicking the Index button again after the initial index operation reindexes all crawled documents.

- If documents have been deleted from the source website or directory since the first index operation, they will be deleted from the index. New documents will also be indexed.

- The current status of a collection is always indicated on the Collection Dashboard and the Index page.

- The index operation can be initiated from the Collection Dashboard as well as the Index sub-tab, whereas scheduling can be performed only from the Index sub-tab.

Best Practices

- Do not schedule two collection operations (Index, Clear) for the same time.

- If you have multiple collections, schedule indexing for no more than 3 collections at the same time.
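These two practices can be checked against a planned schedule before it is entered in the Admin Console. The following is a minimal sketch in Python, assuming a schedule is written down as (collection, operation, start time) tuples and that only identical start times count as "the same time". Overlapping run durations are not considered, and the first practice is read as applying to operations on the same collection. All names are hypothetical.

```python
from collections import Counter, defaultdict

MAX_CONCURRENT_INDEX = 3  # limit taken from the best practices above


def schedule_problems(schedule):
    """Return a list of problems for (collection, operation, start) tuples."""
    problems = []
    ops_at = defaultdict(set)  # (collection, start) -> operations scheduled
    index_count = Counter()    # start -> number of Index operations
    for collection, operation, start in schedule:
        ops_at[(collection, start)].add(operation)
        if operation == "Index":
            index_count[start] += 1
    for collection, start in sorted(ops_at):
        if len(ops_at[(collection, start)]) > 1:
            problems.append(f"{collection}: Index and Clear both start at {start}")
    for start in sorted(index_count):
        if index_count[start] > MAX_CONCURRENT_INDEX:
            problems.append(f"{index_count[start]} collections start indexing at {start}")
    return problems


plan = [
    ("news", "Index", "02:00"),
    ("news", "Clear", "02:00"),
    ("blog", "Index", "02:00"),
    ("docs", "Index", "02:00"),
    ("wiki", "Index", "02:00"),
]
for problem in schedule_problems(plan):
    print(problem)
```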

